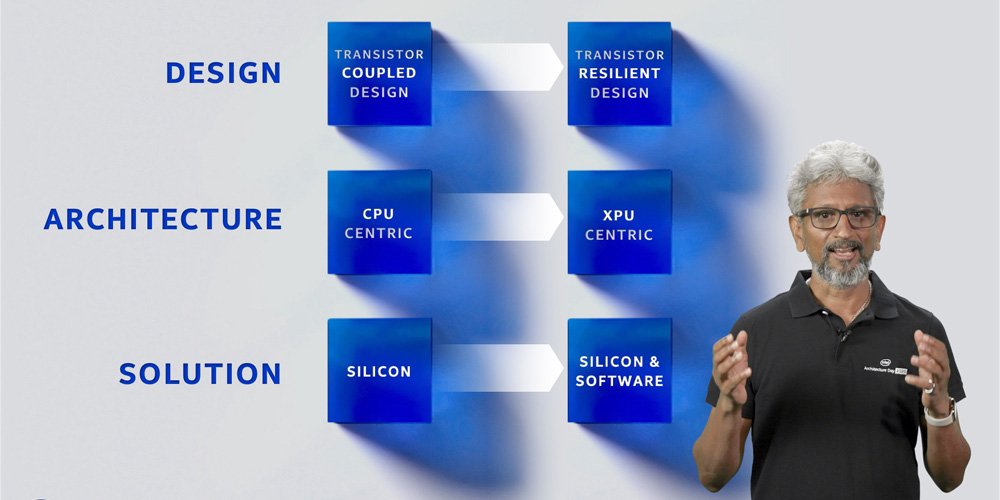

Intel presented their Blueprint for the future at The Intel Architecture Day 2020. Like the Architecture day 2018, they focused all of their future innovations based on their “Six Technology Pillars”. The Six Technology Pillars are Process and Packaging, XPU Architecture, Memory, Interconnect, Security and the sixth is Software.

Intel revealed a lot of information regarding their upcoming CPU cores, every architecture variants for the Xe GPUs, memory advancements with Optane Persistent Memory, efforts on the software front with the help of OneAPI program and the process technology improvements. Let’s walk through briefly over the announcements.

PROCESS AND PACKAGING UPDATED

It’s a well-known fact that Intel took on a fairly aspiring undertaking with their 10nm and 7nm process nodes. And in doing as such, they’ve needed to try a great deal with the semiconductor process, lithography and material innovation that went into every one of their forthcoming process nodes. Intel stated that they were moving far away from the absurdity of attaching extra plusses (14nm++++) each time an improvement was made to the process innovation. Instead, they will now be utilizing more elucidating terms and telling the world more about the enhancements made. Superfin is the initial phase in this new “descriptive” course. Intel’s up and coming 10nm process will presently be alluded to as Intel 10nm Superfin.

Key upgrades incorporate improving the transistor gate pitch to permit higher drive current. Upgraded epitaxial source/channel to decrease resistance and lower strain over time, in conclusion, the gate process has likewise been improved to help with channel portability. At a large scale level, they’ve presented the Super MIM Capacitor which is essentially a stacked grid of slim layers of various Hi-K materials, every one of which is only a couple of angstroms thick.

An angstrom is one hundred-millionth of a centimetre. Basically, by utilizing distinctive Hi-K materials they fabricate a capacitor and the stacking helps increment the capacitance of the whole structure. Another improvement is with the diminished obstruction of the vias inside the stack.

The entirety of the above upgrades had to do with the individual chips. Zooming out to a much bigger scope lies the test of interconnectors for connecting chips together. Intel utilizes a few kinds of interconnects to empower this. The latest of which is Foveros which helped make their most recent Lakefield CPUs occur. Lakefield interconnects had a bump pitch of between 50-25 microns and their future interconnects are required to have a knock pitch of under 10 microns. They’ve additionally taped out chips utilizing hybrid interconnects which utilize various interconnect innovations, for example, Co-EMIB and ODI(Omni-Directional Interconnect).

Each of these interconnects are appropriate for an alternate use case. Some work with empowering the stacking of a littler package onto a larger package. Though different interconnects help with the situation involving a larger package being stacked onto a smaller one. These upgrades will help Intel integrate more chiplets inside a littler impression.

XPU HETEROGENEOUS ARCHITECTURE AND TIGER LAKE

The effect of a CPU microarchitecture is subject to its generality, for example, the number of various applications would you be able to execute utilizing a specific architecture. Intel has a differing portfolio of silicon which is spread across CPUs, GPUs, AI accelerators and FPGAs. Each of these clings to a specific architecture area.

CPUs are scalar, GPUs are vector, AI accelerators are Matrix and FPGAs are spatial. These are completely characterized based on their typical workloads. For instance, CPUs are generic, GPUs are exceptionally parallelised, AI accelerators need to deal with a great Matrix match and FPGAs are utilized for exceptionally cutting edge algorithms that other domains aren’t as capable at handling.

Intel then moved into expressing their objectives for Tiger Lake, their up and coming processor family. To sum up, Intel needed a generational jump in performance with troublesome graphics, adaptable AI, expanded memory bandwidth and guarantee data security. We’ve just secured the packaging and process perspective.

Going to the performance factor, Tiger Lake will utilize Willow Cove which is based upon their Sunny Cove cores that have been updated to have a bigger non-comprehensive cache and with security, upgrades to ensure against return or jump oriented attacks. Intel states that they have accomplished enormous frequency gains while keeping up power efficiency. The net outcome is that they have a more dynamic and unique V-F curve to play with.

For graphics, Intel had just shown the “Horseshoe Bend” idea not long ago at CES 2020 which had Xe Graphics. Since Xe is a wide architecture, Intel has distinctive architecture variations for each focus region. With CPUs, they’re utilizing Xe-LP architecture and in Tiger Lake, we can expect up to 96 Execution Units with 3.8 MB of L3 cache.

Tiger Lake will bolster LP4x-4267 and DDR4-3200 memory and up to LP5-5400 memory for when we have business packs accessible. Generally speaking, the complete memory bandwidth would be around 86 GB/s and Tiger Lake will have two memory regulators on the package.

Since Willow Cove expands upon Sunny Cove, we have AI capacities worked in also. Intel calls this the Gaussian and Neural Accelerator (GNA 2.0). The key improvement here is that it is upgraded for low-power neural inference and furthermore devours less CPU figure assets.

INTEL XE GPU ANNOUNCED AT INTEL ARCHITECTURE DAY 2020

We originally knew about Xe with regards to Ponte Vecchio, Intel’s enterprise-grade GPU for data centres. As leaks around a desktop variant started surfacing began surfacing, we were made mindful of Xe being utilized for consumer graphics also. Also, at CES 2020, we saw the primary models of thin and light note pads with Intel Xe graphics. Fundamentally, Intel has taken Xe and stretched it into variants which are appropriate for data centres, accelerators, fan gamers and for integrated graphics. The variant for integrated graphics is XeLP while the one for gamers is named XeHPG. Xe is in fact, Intel’s Gen12 graphics engine.

For XeLP, Intel set out with an objective of 1.5x regarding the performance targets. Contrasted with Gen11 on Ice Lake which had a limit of 64 Execution Units (EUs), Xe will have up to 96 EUs and the new Superfin transistor will help separate enormous frequency gains while holding power efficiency. So regarding pure EU counts, Intel has gone 1.5x over Gen11. The Gen11 IGP inside Ice Lake 1065G7 can scale up to 1.1 GHz and the V-F chart exhibited by Intel shows that it can work over a bigger voltage range and highlight a maximum frequency in abundance of 1.7 GHz. Generally speaking, Intel states that XeLP will highlight 2x the performance of Gen11 graphics.

Xe is a ground up re-architected graphics framework so it includes a considerable amount of contrasts with the EU too. In Gen11, Intel had two four-wide ALUs for FP/INT and FP/EM workloads. Generally, these were 8 ALUs working on various workloads. In XeLP, Intel is tossing in two extra ALUs and the design has now been changed with the goal that eight ALUs are utilized for FP/INT and two for FP/EM. Intel is revising the innards of the EUs to adjust to the changing workloads and given the way that a portion of their expansions have gotten more efficient at handling specific workloads. XeLP adequately has 2x the INT math capacities because of the multiplying of the FP/INT ALUs from 4 to 8. Likewise, the FP/EM ALUs now work autonomously from the FP/INT ALUs so the FP/INT ALUs don’t need to sit while waiting for the FP/EM ALUs to complete their workloads at hand. This has expanded the control logic required to deal with the FP/EM ALUs yet the general effect of this compromise is a more efficient graphics pipeline.

Going to the memory subsystem, Xe-LP presently has another L1 cache that is free of what the rest of the CPU utilizes and the L3 cache has been knock up to 16 MB. Another improvement is that the compression codecs are currently stretched out to the media and display interfaces. Intel then exhibited gaming performance on Xe-LP with games, for example, Battlefield V, PUBG and DOOM Eternal.

In conclusion, Intel presented Xe-HPG, a variant of Xe for High Performance Gaming. Xe-HPG will likewise have support for Hardware-Accelerated Ray Tracing. Intel didn’t make reference to if Xe-HPG will be utilized to fabricate discrete graphics cards to contend with the semblance of NVIDIA and AMD. Taking into account that Xe-LP is now centered around integrated graphics, we could without much of a stretch expect that discrete graphics are in transit. Intel stated that Xe-LP will be releasing out in 2020 and the remainder of the Xe family including the HPC, HP and HPG variations will be out in 2021.

SECOND GEN 3D-XPOINT OPTANE MEMORY AND SSDS

Intel had revealed their first gen Optane DC Persistent Memory Modules (DCPMM) in 2017 and they were a monstrous jump in memory capacity with respect to data centres. Enough to include another storage level in the memory heirarchy. At Architecture Day 2020, Intel revealed the upcoming gen of Optane DCPMM as Barlow Pass and for SSDs, they have Alder Stream.

With the second gen Optane memory, Intel is picking a 4-layer stack which basically twofold density along the vertical axis. Beside thickness gains, Intel is likewise preparing these new Optane devices with improved memory regulators that can bolster PCIe 4.0. Barlow Pass guarantees a 25% addition in performance over the main gen Apache Pass modules.

The new SSDs will be releasing soon and the Barlow Pass DCPMM modules have already available at the market.

INTEL ONEAPI GOES GOLD

OneAPI, Intel’s unified programming model which was disclosed at Architecture Day 2018 is currently set to go gold in the second-50% of 2020. OneAPI is a far-reaching stack of compilers, libraries, migration devices and analysis software that is intended to run into the length and broadness of Intel’s hardware portfolio, henceforth, “unified”.

2 Comments

Pingback: Intel's Ryzen Killer: Alder Lake Hybrid Processors Deliver the biggest efficiency leap in 14 years. - Craffic

Pingback: Intel announces its latest 11th Gen Tiger Lake CPUs, available this fall on laptops - Craffic