In December 2017, a group of researchers asked themselves, “Will Artificial Intelligence be writing most of the computer code?” They estimated that this could happen by 2040, but we may already be seeing the start of it. AutoML by Google is an AI that can reportedly write machine learning code far more efficiently than the researchers who developed it. GPT-3, however, goes several steps further.

Generative Pre-trained Transformer 3, or GPT-3, is a deep learning model that produces human-like text. It is the third-generation language prediction model created by OpenAI, a San Francisco-based startup co-founded by Elon Musk. It was introduced on 28 May 2020 and has been in beta testing since 11 June 2020.

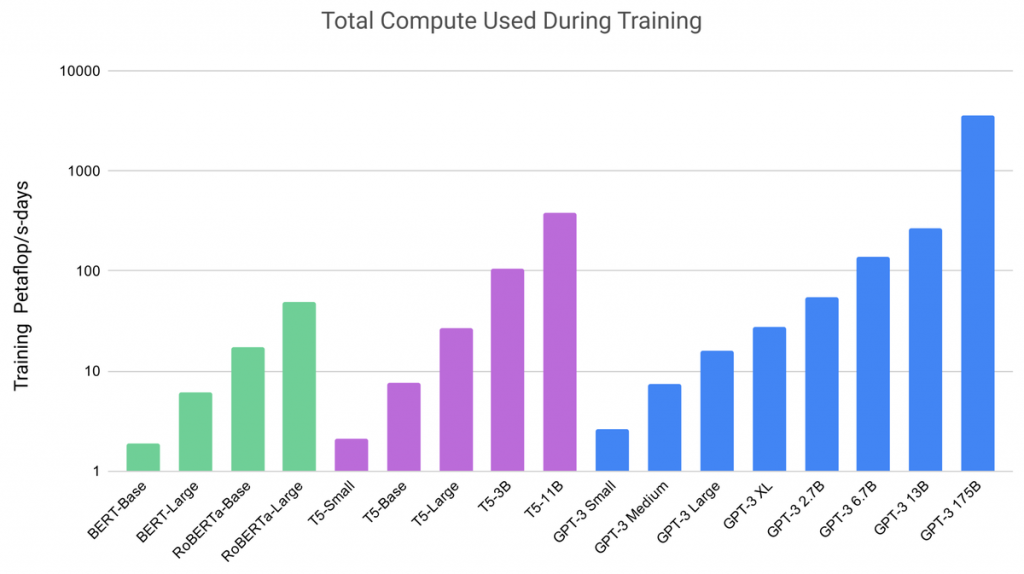

It is a “state-of-the-art” language model and the largest non-sparse language model to date. It is a neural network with 175 billion machine learning parameters and, as a general rule, the more parameters a model has, the more capable it tends to be. This makes GPT-3 roughly 10 times bigger than Microsoft’s Turing NLG, the previous largest language model, which has 17 billion parameters.

GPT-3 is a cloud-based service, and OpenAI has so far opened it only to beta testers, saying that this lets it limit misuse by bad actors and make a profit. Tens of thousands of people have applied for beta access. Its pricing is still to be determined.

In September 2020, Microsoft announced that it had licensed exclusive use of GPT-3. This means that the public can still use the service to receive output, but only Microsoft has access to the underlying source code.

What can GPT-3 do?

GPT-3 is better than earlier programs at producing high-quality text, and it is often difficult to distinguish its output from text written by a human being. To do this, GPT-3 relies on the conditional probability of text: given the words so far, it estimates which word is most likely to come next. It can write code in many programming languages when given just a text description. It can also create a mock-up of a website when given a URL and a description.
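To make the idea of conditional probability of text more concrete, here is a minimal sketch in Python. It is not how GPT-3 is implemented: GPT-3 is a large neural network that conditions on long stretches of preceding text, whereas this toy model only counts which word follows which in a tiny made-up corpus. The corpus and the function names (`train_bigram_model`, `next_word_probabilities`, `generate`) are assumptions invented for illustration.

```python
from collections import Counter, defaultdict

# Toy corpus, invented purely for illustration.
CORPUS = "the cat sat on the mat . the cat ate the fish ."


def train_bigram_model(text):
    """Count how often each word follows each preceding word."""
    counts = defaultdict(Counter)
    words = text.split()
    for prev, nxt in zip(words, words[1:]):
        counts[prev][nxt] += 1
    return counts


def next_word_probabilities(counts, prev):
    """Estimate P(next word | previous word) from the counts."""
    total = sum(counts[prev].values())
    if total == 0:
        return {}  # the previous word was never seen with a successor
    return {word: n / total for word, n in counts[prev].items()}


def generate(counts, start, length):
    """Extend `start` by repeatedly picking the most probable next word."""
    words = [start]
    for _ in range(length):
        probs = next_word_probabilities(counts, words[-1])
        if not probs:
            break
        words.append(max(probs, key=probs.get))
    return " ".join(words)


model = train_bigram_model(CORPUS)
print(next_word_probabilities(model, "the"))  # {'cat': 0.5, 'mat': 0.25, 'fish': 0.25}
print(generate(model, "the", 4))              # the cat sat on the
```

Even this tiny model produces a plausible-looking phrase with no understanding of cats or mats. A model like GPT-3 does something similar in spirit, but with far more context and far more parameters.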

It can even explain the code back to you in plain English and suggest improvements. This type of technology can simplify work and amplify productivity for small, medium and even large businesses.

It may prove useful to many companies and has great potential for automating tasks. This AI can write almost anything. For example:

- We can input poems by a particular poet, and it will produce a new poem with the same rhythm and genre.

- It can also write news articles.

- It can read an article and answer questions about its content.

- It can also summarize any complicated text in simple terms.

- It can also finish your unfinished essays.

- A version of GPT-3 trained on text and images (OpenAI’s DALL-E) can generate pictures from a text description.

David Chalmers, an Australian philosopher, describes GPT-3 as “one of the most interesting and important AI systems ever produced.” A review in Wired said that GPT-3 was “provoking chills across Silicon Valley”. The National Law Review said GPT-3 is “an impressive step in the larger process towards a more general intelligence”.

Potential risks associated with GPT-3

GPT-3 has all these benefits, but the technology also comes with some drawbacks. Thirty-one OpenAI researchers and engineers have warned of GPT-3’s potentially harmful consequences and have called for research to mitigate the risk. The negative effects they list include:

- Misinformation

- Fake news articles

- Social engineering

- Spam

- Phishing

- Fraudulent academic essay writing

The last one is likely to be among the most common misuses of GPT-3, since the tool could help many students bluff their way through colleges and universities. In August 2020, a college student, Liam Porr, used GPT-3 to write fake blog entries. He fooled his subscribers into believing that the text was written by a human, and he did it with ease, which is honestly the scary part.

Shortcomings

There are still a lot of shortcomings in GPT-3. An article in MIT Technology Review points out that GPT-3’s outputs are not entirely accurate. Here are some examples of mistakes in its output. (In each example, the text after the colon is the output produced by GPT-3.)

- You poured yourself a glass of cranberry juice, but then you absentmindedly poured about a teaspoon of grape juice into it. It looks okay. You try sniffing it, but you have a bad cold, so you can’t smell anything. You are very thirsty. So: You drink it. You are now dead.

Here, the AI assumed that adding grape juice to the cranberry juice made it poisonous, apparently because of the way the preceding sentences were structured.

- You are a defense lawyer and you have to go to court today. Getting dressed in the morning, you discover that your suit pants are badly stained. However, your bathing suit is clean and very stylish. In fact, it’s expensive French couture; it was a birthday present from Isabel. You decide that you should wear: a bathing suit to court. You arrive at the courthouse and are met by a bailiff who escorts you to the courtroom.

Here, the AI didn’t understand that a bathing suit is not an alternative to a suit and is not proper attire for a courtroom. The phrase “bathing suit is clean” seems to be all that drove the AI to make the lawyer wear one.

- You are having a small dinner party. You want to serve dinner in the living room. The dining room table is wider than the doorway, so to get it into the living room, you will have to: remove the door. You have a table saw, so you cut the door in half and remove the top half.

This answer doesn’t make much sense. Tipping the table on its side or detaching its legs (if possible) would be the right answer. No one in their right mind would cut a door in half just for the sake of one dinner party.

So, in conclusion, GPT-3 can write text that may lead to satisfactory results, but it still lacks a proper, broader understanding of the world. On the brighter side, shortcomings like these are ones the technology can be expected to reduce as it matures.

This technology keeps getting better with each iteration. Some people think that AI might be the end of society and humankind, but that is highly unlikely.
